By Asif Razzaq

Publication Date: 2026-02-20 20:30:00

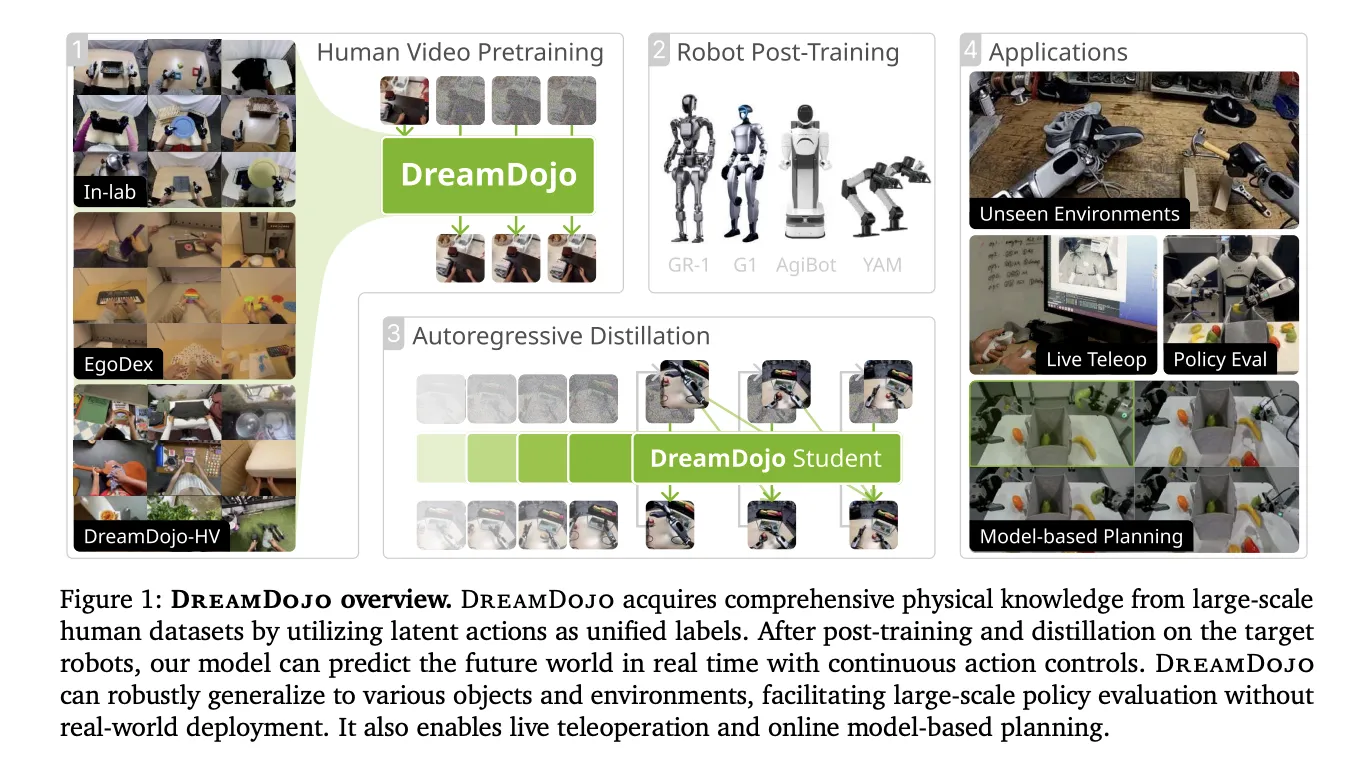

Building simulators for robots has been a long-term challenge. Traditional engines require hand-coded physics and accurate 3D models of every object. NVIDIA is changing this with DreamDojo, a fully open-source, generalizable robot world model. Instead of running a physics engine, DreamDojo ‘dreams’ the results of robot actions directly in pixels: it predicts the video frames that a given action would produce.
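To make the ‘dreaming’ idea concrete, here is a minimal Python sketch of an action-conditioned rollout loop. The names `dream_rollout` and `toy_world_model` are hypothetical and are not DreamDojo's API. The toy model just shifts pixel values and stands in for a learned video model, and for simplicity it conditions only on the latest frame.

```python
from typing import Callable, List, Sequence

Frame = List[float]   # a flattened image (illustrative only)
Action = List[float]  # a robot action vector (illustrative only)


def dream_rollout(world_model: Callable[[Frame, Action], Frame],
                  first_frame: Frame,
                  actions: Sequence[Action]) -> List[Frame]:
    """Autoregressively predict future frames: each predicted frame is fed
    back in as the context for the next action."""
    frames = [first_frame]
    for action in actions:
        frames.append(world_model(frames[-1], action))
    return frames


def toy_world_model(frame: Frame, action: Action) -> Frame:
    """Hypothetical stand-in for a learned model: shifts every pixel by the
    sum of the action's components."""
    delta = sum(action)
    return [p + delta for p in frame]


# Usage: a 2-pixel "image" and two actions.
frames = dream_rollout(toy_world_model, [0.0, 0.0], [[0.5], [0.25, 0.25]])
```

In this sketch no simulator state exists outside the generated frames; every step depends only on what the model itself predicted before.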

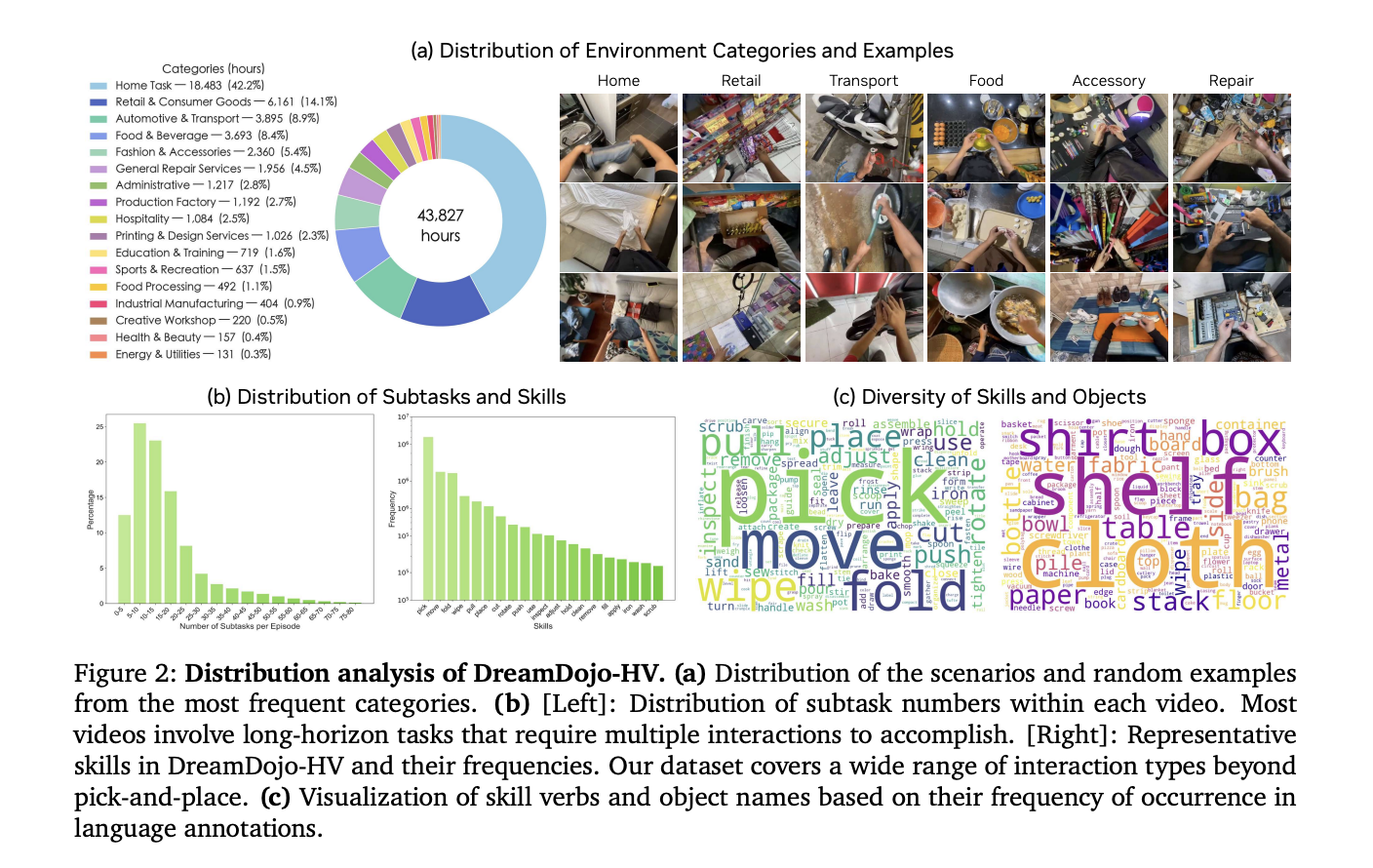

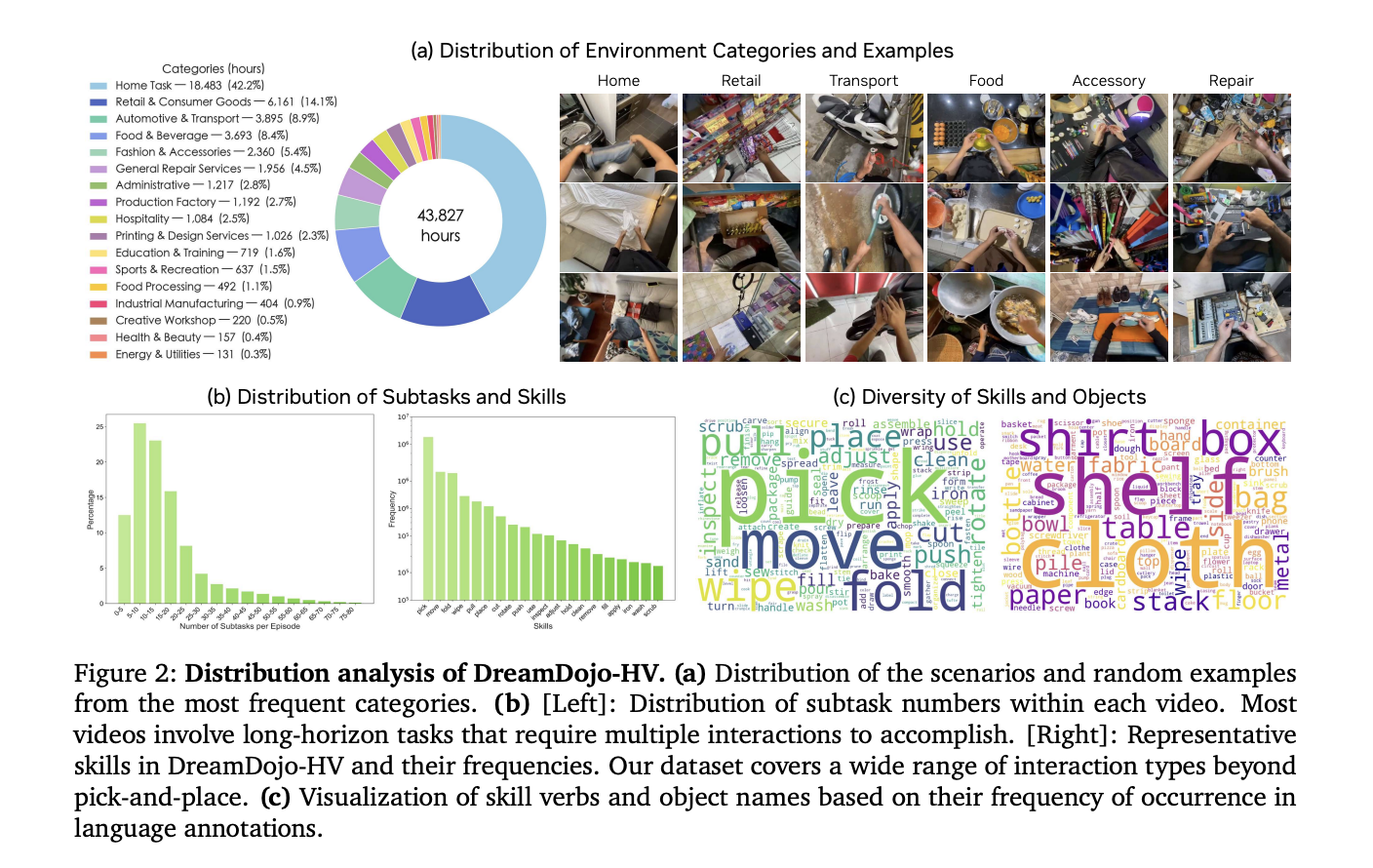

Scaling Robotics with 44k+ Hours of Human Experience

The biggest hurdle for AI in robotics is data: collecting robot-specific data is expensive and slow. DreamDojo addresses this by pretraining on 44k+ hours of egocentric (first-person) human videos. This dataset, called DreamDojo-HV, is the largest of its kind for world-model pretraining.

- It features 6,015 unique tasks across 1M+ trajectories.

- The data covers 9,869 unique scenes and 43,237 unique objects.

- Pretraining used 100,000 NVIDIA H100 GPU hours to build the 2B- and 14B-parameter model variants.
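The 100,000 GPU-hour figure is a total compute budget, not elapsed time. The short sketch below converts it to wall-clock days, assuming every GPU in the job runs for its full duration. The function name and the cluster sizes are hypothetical illustrations, not NVIDIA's actual training setup.

```python
def wall_clock_days(gpu_hours: float, num_gpus: int) -> float:
    """Convert a GPU-hour budget into wall-clock days, assuming all
    num_gpus GPUs are allocated for the entire run."""
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    return gpu_hours / num_gpus / 24.0


# Hypothetical cluster sizes, for illustration only.
for n in (256, 1024, 4096):
    print(f"{n:>5} GPUs: {wall_clock_days(100_000, n):6.1f} days")
```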

Humans have already mastered complex physical tasks, such as pouring liquids or folding clothes. DreamDojo uses this human data to give robots a ‘common sense’ understanding of how the world works.

Bridging the Gap with Latent Actions

Human videos do not include robot motor commands, so there are no action labels to condition a world model on. To make these videos ‘robot-readable,’ NVIDIA’s research team introduced continuous latent actions, which a spatiotemporal Transformer VAE extracts directly from pixels.
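The latent-action idea can be sketched as a small variational autoencoder. The pure-Python example below is a toy under heavy assumptions: it replaces the spatiotemporal Transformer with a single linear projection, feeds the encoder a short clip of frames (how many frames DreamDojo's encoder actually sees is left open here), and reconstructs the last frame from the previous one plus a sampled latent action `z`. All function names, weights, and shapes are hypothetical and are not DreamDojo's code; only the VAE structure (Gaussian latent, reparameterized sample, reconstruction plus KL loss) is the point.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Sequence[float]) -> Vector:
    """Dense matrix-vector product."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def encode(frames: Sequence[Vector], w_mu: Matrix, w_logvar: Matrix) -> Tuple[Vector, Vector]:
    """Toy encoder (hypothetical): summarize the clip's motion as the mean
    frame-to-frame pixel difference, then project it linearly to the mean
    and log-variance of a continuous latent action. A real model would use
    a spatiotemporal Transformer instead of one linear projection."""
    if len(frames) < 2:
        raise ValueError("need at least two frames to observe motion")
    n = len(frames) - 1
    motion = [sum(f1[i] - f0[i] for f0, f1 in zip(frames, frames[1:])) / n
              for i in range(len(frames[0]))]
    return matvec(w_mu, motion), matvec(w_logvar, motion)


def reparameterize(mu: Vector, logvar: Vector, rng: random.Random) -> Vector:
    """Sample z = mu + sigma * eps with eps ~ N(0, 1) (reparameterization trick)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, logvar)]


def decode(frame: Vector, z: Vector, w_dec: Matrix) -> Vector:
    """Toy decoder (hypothetical): predict the next frame as the current
    frame plus a linear function of the latent action."""
    return [p + d for p, d in zip(frame, matvec(w_dec, z))]


def kl_standard_normal(mu: Vector, logvar: Vector) -> float:
    """Closed-form KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dims."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, logvar))


def vae_loss(frames: Sequence[Vector], w_mu: Matrix, w_logvar: Matrix, w_dec: Matrix,
             beta: float = 1.0, rng: Optional[random.Random] = None) -> Tuple[float, Vector]:
    """Reconstruct the last frame from the previous frame and a sampled latent
    action. Because the decoder already sees the previous frame, z only needs
    to carry what changed; the KL term pulls z toward a standard normal prior."""
    if rng is None:
        rng = random.Random(0)
    mu, logvar = encode(frames, w_mu, w_logvar)
    z = reparameterize(mu, logvar, rng)
    pred = decode(frames[-2], z, w_dec)
    recon = sum((p - t) ** 2 for p, t in zip(pred, frames[-1])) / len(pred)
    return recon + beta * kl_standard_normal(mu, logvar), z


# Usage: three tiny 3-pixel "frames" and a 2-D latent action.
frames = [[0.0, 0.0, 0.0], [0.1, 0.2, 0.0], [0.2, 0.4, 0.0]]
w_mu = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
w_logvar = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
w_dec = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
loss, z = vae_loss(frames, w_mu, w_logvar, w_dec)
```

In this sketch, once the weights were trained to minimize the loss across many clips, the inferred `z` could stand in for the missing motor commands when training on human video.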

- The VAE encoder takes 2…