By Lin Chai

Publication Date: 2026-01-08 17:28:00

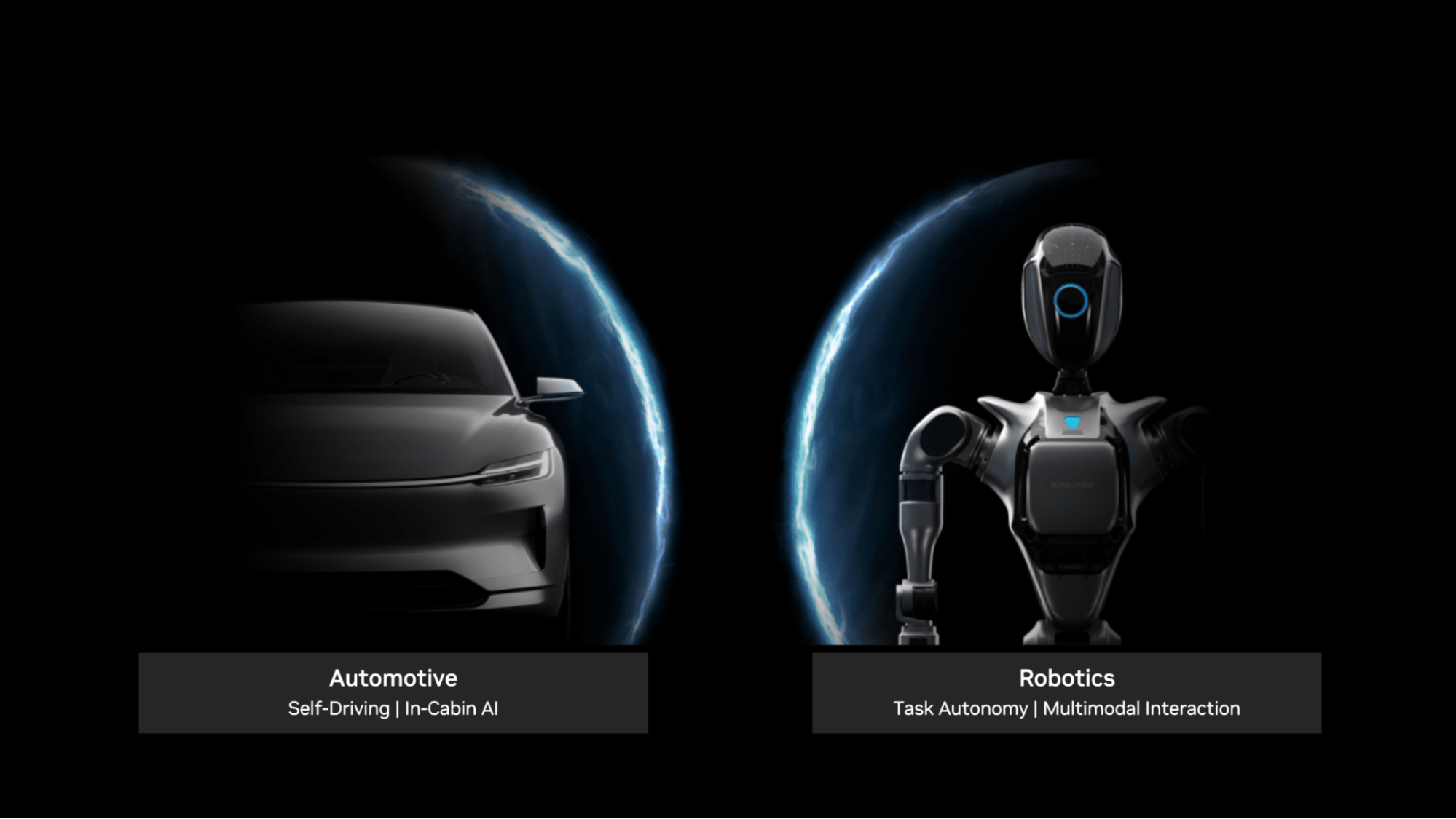

Large language models (LLMs) and multimodal reasoning systems are rapidly expanding beyond the data center. Automotive and robotics developers increasingly want to run conversational AI agents, multimodal perception, and high-level planning directly on the vehicle or robot – where latency, reliability, and the ability to operate offline matter most.

While many existing LLM and vision language model (VLM) inference frameworks focus on data center needs such as managing large volumes of concurrent user requests and maximizing throughput across them, embedded inference requires a dedicated, tailored solution.

This post introduces NVIDIA TensorRT Edge-LLM, a new open source C++ framework for LLM and VLM inference that addresses the emerging need for high-performance edge inference. Edge-LLM is purpose-built for real-time applications on the embedded automotive and robotics platforms NVIDIA DRIVE AGX Thor and NVIDIA Jetson Thor. The framework is available as open source on GitHub for the NVIDIA JetPack 7.1 release.

TensorRT Edge-LLM has minimal dependencies, which enables deployment in production edge applications. Its lightweight design, with a clear focus on embedded-specific capabilities, minimizes the framework’s resource footprint.

In addition, advanced features in TensorRT Edge-LLM, such as EAGLE-3 speculative decoding, NVFP4 quantization support, and chunked prefill, provide cutting-edge performance for demanding real-time use cases.