By Chris Mellor

Publication Date: 2026-01-06 12:30:00

Nvidia has moved to address the GPU memory limits that growing KV caches run into by standardizing the offload of inference context to NVMe SSDs through its new Inference Context Memory Storage Platform (ICMSP). Announced at CES 2026, ICMSP extends GPU KV cache into NVMe-based storage and is backed by Nvidia’s NVMe storage partners.

In large language model inference, the KV cache stores context data: the key and value vectors that the model's attention layers compute for each token already processed, so later tokens can attend to them without recomputing them. As inference progresses, this context grows with every new token processed or generated, often exceeding available GPU memory. When older entries are evicted and later needed again, they must be recomputed, increasing latency. Agentic AI and long-context workloads amplify the problem by expanding the amount of context that must be retained. ICMSP aims to mitigate this by making NVMe-resident KV cache part of the context memory address space and persistent across inference runs.
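The trade-off described above, recomputing evicted context versus fetching it from a slower but larger tier, can be illustrated with a minimal sketch. It assumes a simplified model in which context is stored in named blocks, the "GPU" tier holds a fixed number of blocks with least-recently-used eviction, and the "NVMe" tier is simulated by an in-memory dictionary. The class `TieredKVCache` and its methods are hypothetical illustrations, not part of ICMSP or any Nvidia API.

```python
from collections import OrderedDict


class TieredKVCache:
    """Hypothetical two-tier KV cache simulation (not Nvidia's ICMSP API).

    A small 'GPU' tier evicts its least recently used block when full.
    With offload enabled, evicted blocks spill to a simulated 'NVMe' tier
    and are promoted back on reuse; without it, they must be recomputed.
    """

    def __init__(self, gpu_capacity, offload=True):
        self.gpu_capacity = gpu_capacity
        self.offload = offload
        self.gpu = OrderedDict()  # block id -> KV data, oldest first
        self.nvme = {}            # simulated NVMe tier (plain dict here)
        self.computes = 0         # prefill computations, initial or repeated
        self.nvme_hits = 0        # blocks fetched from the NVMe tier

    def _compute_kv(self, block_id):
        # Stand-in for the attention work that produces keys and values.
        self.computes += 1
        return f"kv({block_id})"

    def _insert_gpu(self, block_id, kv):
        self.gpu[block_id] = kv
        self.gpu.move_to_end(block_id)  # mark as most recently used
        while len(self.gpu) > self.gpu_capacity:
            old_id, old_kv = self.gpu.popitem(last=False)  # evict LRU block
            if self.offload:
                self.nvme[old_id] = old_kv  # spill instead of discarding

    def get(self, block_id):
        if block_id in self.gpu:
            self.gpu.move_to_end(block_id)
            return self.gpu[block_id]
        if block_id in self.nvme:
            self.nvme_hits += 1
            kv = self.nvme.pop(block_id)  # promote back to the GPU tier
        else:
            kv = self._compute_kv(block_id)
        self._insert_gpu(block_id, kv)
        return kv


def replay(accesses, gpu_capacity, offload):
    """Run an access pattern through a cache and return the cache."""
    cache = TieredKVCache(gpu_capacity, offload=offload)
    for block_id in accesses:
        cache.get(block_id)
    return cache


if __name__ == "__main__":
    pattern = ["a", "b", "c", "a", "b", "c"]
    for offload in (False, True):
        c = replay(pattern, gpu_capacity=2, offload=offload)
        print(f"offload={offload}: computes={c.computes}, nvme_hits={c.nvme_hits}")
```

With a GPU tier that holds two blocks and a cyclic pattern over three, the no-offload run recomputes every block on reuse (six computations), while the offload run computes each block once and serves the three reuses from the simulated NVMe tier. A real system would also weigh NVMe read latency against recompute cost, which this sketch ignores.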

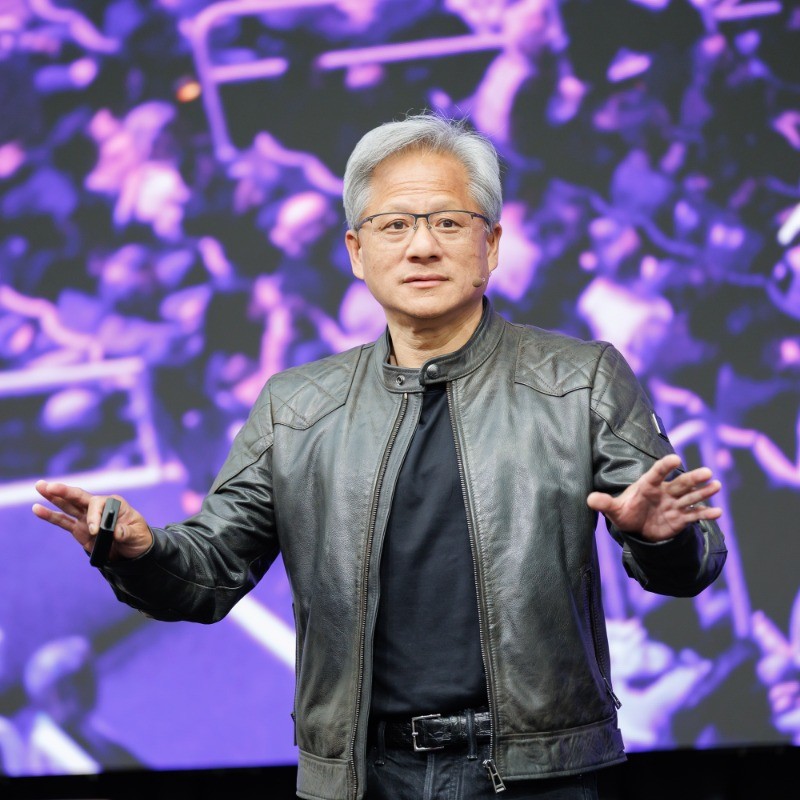

Nvidia CEO and founder Jensen Huang said: “AI is revolutionizing the entire computing stack – and now, storage. AI is no longer about one-shot chatbots but intelligent collaborators that understand the physical world, reason over long horizons, stay grounded in facts, use tools to do real work, and retain both short and long-term memory. With BlueField-4, Nvidia and our software and hardware partners are reinventing the storage stack for the next frontier of AI.”

During his CES…
